There are several branching strategies for controlling and managing the source code of your projects. I have read about many of them in theory; some are well defined and others are customized. The one I had never thought of implementing is "branches by environment". When I did implement it, I customized it to preserve, as far as possible, the development practices already used in the project, and it mutated over time (in order to achieve continuous integration and delivery).

I joined the project two years after it started. If they had used Git or Mercurial, the story would have been very different.

Next I will briefly introduce some well-known strategies, and then the one I ended up using on a large, complex project (the ones I tried before, and mixes of them, did not give me results).

The "mainline" strategy is commonly used. The main branch holds the "stable" code of the current version of the project, and each version added to it (as a merge commit) is tagged. Temporary branches for bugfixing (maintenance and bugs) and temporary branches for development are derived from it.

In practice this is the most common strategy, and it has these advantages:

-Few branches

-Fewer merges

-Quick error detection

-Fewer bugs

-Easy to integrate and version

-Allows clean continuous integration

-Quick feedback and status

As disadvantages, we can also say that:

-We think we have a stable line and that it really is a stable version (without TDD mechanisms or well-oiled testing, this will never happen).

-For developers on large teams it is a headache: they must continually push their own code and pull other developers' changes.

-When working this way it is common to remove or hide functionality (with switches, variables or flags), which can leave dirty or obsolete code that no one later restores or removes.

-If there is continuous integration and/or continuous delivery, the team must constantly check the code and wait for the rest of the team's build errors to be fixed.

Others choose to branch by release or by feature. The two are similar, since both try to freeze the version to be deployed and/or the new developments in parallel branches and integrate them later (there are many branches, and in some cases branches for release fixes or feature fixes appear and make the merging more extensive). This strategy is useful if the features to be versioned are planned in advance (it requires a lot of planning and predefined deliveries), so it works when agile methodologies are used for development. Some people think they use them, though, without applying them as they should be applied. It then becomes a big headache if you don't define sprints correctly, if you don't version the functionality, or if you keep developing on code that needs adjusting instead of working on the bugfixes first. This makes continuous integration and deployment extremely complex and excessive in volume (not to mention testing!).

And here is the part you may identify with: a project with more than 30 people working on it, extensive features, dynamic releases, parallel bugfixing, several release delivery paths, strange development methodologies (none in particular) and poor test management, among other things.

After struggling to impose a conventional branching strategy, I was forced to change "my" branching strategy to "branching by environment".

Since this project has several pre-production environments, that is the strategy I decided to implement (you can google the different ways it can be implemented; it has some things in common with "branching by quality").

“Much like feature and bug-fix branches, environment branches make it easy for you to separate your in-progress code from your stable code. Using environment branches and deploying from them means you will always know exactly what code is running on your servers in each of your environments.”

“In order to keep your environment branches in sync with the environments, it’s a best practice to only execute a merge into an environment branch at the time you intend to deploy. If you complete a merge without deploying, your environment branch will be out of sync with your actual production environment.”

“Best Practices with Environment Branches:

Use your repository’s default working branch as your “stable” branch.

Create a branch for each environment, including staging and production.

Never merge into an environment branch unless you are ready to deploy to that environment.

Perform a diff between branches before merging—this can help prevent merging something that wasn’t intended, and can also help with writing release notes.

Merges should only flow in one direction: first from feature to staging for testing; then from feature to stable once tested; then from stable to production to ship.”

http://guides.beanstalkapp.com/version-control/branching-best-practices.html#branches-environments
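
The "one direction" and "diff before merging" rules quoted above can be checked mechanically. The following is only a minimal sketch in Python, not something Beanstalk provides: the branch names `staging`, `stable` and `production`, the rule that any other name counts as a feature branch, and the function names are all assumptions made for illustration.

```python
# Allowed merge directions, taken from the quoted best practice.
# The role names are assumptions for this sketch.
ALLOWED_FLOWS = {
    ("feature", "staging"),     # test the feature first
    ("feature", "stable"),      # integrate it once tested
    ("stable", "production"),   # ship
}

ENVIRONMENT_BRANCHES = {"staging", "stable", "production"}


def role_of(branch):
    """Map a branch name to its role; any unknown name counts as a feature branch."""
    return branch if branch in ENVIRONMENT_BRANCHES else "feature"


def can_merge(source, target):
    """Return True when merging source into target follows the one-way flow."""
    return (role_of(source), role_of(target)) in ALLOWED_FLOWS


def pending_changes(source_commits, target_commits):
    """Commits in source that target lacks: a stand-in for a pre-merge diff."""
    seen = set(target_commits)
    return [c for c in source_commits if c not in seen]


if __name__ == "__main__":
    print(can_merge("feature/login", "staging"))    # follows the flow
    print(can_merge("production", "stable"))        # wrong direction
    print(pending_changes(["a", "b", "c"], ["a"]))  # what the merge would bring in
```

A check like `can_merge` could run before a merge is accepted, and the output of `pending_changes` could be the starting point for release notes.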

“Pattern: Branch per Environment”

A situation often encountered, especially with software systems being developed for “in-house” use, is the requirement to migrate software into successive different environments. Typically this starts with a development environment (sometimes several different ones, allowing for segregation between work by different teams) and moves on into system test, UAT (user acceptance test) and production environments.

By modelling each environment with a parallel “development” branch in the software repository, it is possible to harness the CM tool to assist with:

Packaging and deployment of migrations between environments;

Status accounting of current configuration in each environment;

Access control to different environments; and

Traceability of changes as they move through the environments (for instance, so that testers know which fixes and features are available for test).

http://www.methodsandtools.com/archive/archive.php?id=12

In my particular case, I mutated and customized it to cover the existing versioning difficulties and to be able to implement a continuous integration and delivery strategy.

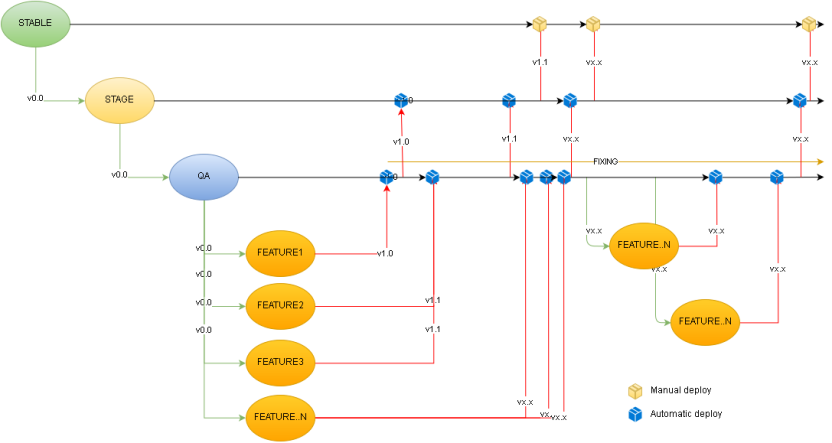

First, we must create a "stable" or "main" branch that contains a stable version or the first production version. This is the starting point of the branching. Then, from the stable branch, we create as many child branches as we have pre-production environments. Next we create a "QA" branch to unify the versioning of the features to be integrated. From the "QA" branch we create as many feature branches as we need, plus a fixes branch for bugfixing. The idea is the following:

-When we need a new development, we create a new branch (for example: Feature1) from the QA branch.

-If we need to do bugfixing, we use the fixes branch.

-When we want to test a version, we select the code we need and merge it into QA. This is a trial version (not a stable version). QA always holds the unified version to be deployed in the future. Functionality to be removed is removed in QA; functionality or fixes to be added are integrated into QA. The merged feature branches are then deleted.

-QA is deployed to a QA environment (having the exact code of the environment allows pure continuous delivery).

-QA is tested and adjusted as testing feedback comes back (in theory there should be no version to adjust, unless the development is of poor quality).

-Once QA is approved, it is merged into Stage, where the same process happens as in QA. When the version is approved and agreed on, it is merged into Stable.

-The same happens with the other branches.
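
To make the flow easier to follow, here is a toy model of it in Python. It is only a sketch under assumptions: each branch is reduced to an ordered list of change IDs, the `Repo` class and its methods are invented for this example, the branch names `stage`, `qa`, `fixes` and `feature1` are placeholders, and creating `qa` directly from `stable` is my reading of the description rather than a rule.

```python
class Repo:
    """Toy repository: each branch is an ordered list of change identifiers."""

    def __init__(self, first_release):
        # "stable" starts with the first production version.
        self.branches = {"stable": list(first_release)}

    def branch(self, name, source):
        """Create a branch as a copy of source."""
        self.branches[name] = list(self.branches[source])

    def commit(self, name, change):
        """Record a new change on a branch."""
        self.branches[name].append(change)

    def merge(self, source, target):
        """Append to target the changes of source that it does not have yet."""
        have = set(self.branches[target])
        self.branches[target].extend(
            c for c in self.branches[source] if c not in have
        )

    def delete(self, name):
        """Remove a branch once it has been merged."""
        del self.branches[name]


def run_example():
    repo = Repo(["v1"])
    repo.branch("stage", "stable")   # one branch per pre-production environment
    repo.branch("qa", "stable")      # unifies what will be deployed next
    repo.branch("fixes", "qa")       # bugfixing branch
    repo.branch("feature1", "qa")    # new development
    repo.commit("feature1", "feature1-change")
    repo.commit("fixes", "bugfix-1")
    repo.merge("feature1", "qa")     # build the trial version in QA
    repo.merge("fixes", "qa")
    repo.delete("feature1")          # merged feature branches are removed
    repo.merge("qa", "stage")        # QA approved
    repo.merge("stage", "stable")    # Stage approved
    return repo


if __name__ == "__main__":
    for name, changes in run_example().branches.items():
        print(name, changes)
```

The model ignores conflicts and history on purpose; it only shows which branch receives which changes, and in what order.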

Benefits:

-Fast continuous delivery.

-Control over the version to be deployed.

-Early detection of bugs, bad testing, bad merges, bad implementation, bad planning.

-Isolation and atomization of developments and fixing.

-Centralization of the version.

-What is deployed is what is in the branch (having the same branch in different environments usually makes the team assume that an error is a deployment error and not a data or code error).

-Pure, isolated continuous integration and delivery.

-Healthy rollback (in my case it never failed, and the integration could always be undone and regenerated without problems, even with more than 5 integrated branches).

-Incremental integration, with the possibility of integrating from any branch.

-Automated and much faster delivery to environments.

-The code pushed by the team is isolated and does not break versions (something very common with other strategies). However, all the responsibility falls on the person in charge of the merge. If the development code is badly written, it carries errors along; if the code to be integrated is badly planned, it ends up integrated on top of functionality that is inconsistent, that has fixes which changed it, or that is missing fixes not yet integrated.
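
The "healthy rollback" point can be pictured as rebuilding the integration branch from its base with a different selection of branches. This is a minimal sketch of that idea, assuming each branch can be treated as a list of its own change IDs; the function name and the branch names are hypothetical, and it is not necessarily how it would be done with the real version control tool.

```python
def rebuild_integration(base, branches, selected):
    """Recreate an integration branch from base plus the selected branches, in order.

    base:     changes already in the starting point (for example, stable)
    branches: mapping of branch name to the changes made on that branch
    selected: names of the branches to integrate, in merge order
    """
    result = list(base)
    seen = set(result)
    for name in selected:
        for change in branches[name]:
            if change not in seen:
                result.append(change)
                seen.add(change)
    return result


if __name__ == "__main__":
    work = {
        "feature1": ["f1-a", "f1-b"],
        "feature2": ["f2-a"],
        "fixes": ["fix-1"],
    }
    # First integration: everything goes into QA.
    print(rebuild_integration(["v1"], work, ["feature1", "feature2", "fixes"]))
    # Rollback of feature2: regenerate QA without it.
    print(rebuild_integration(["v1"], work, ["feature1", "fixes"]))
```

Because the feature and fixes branches stay isolated until they are merged, dropping one of them is a matter of regenerating the integration rather than reverting individual changes.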

This strategy also has its cons, although they were milder than with the other strategies:

-A lot of flexibility, which is good on the one hand but invites abuse on the other (too much flexibility gets demanded). This is avoided by curbing incongruent requests.

-QA gets a lot of bugs, and they increase if feature and development branches are kept for a long time (more than 1-2 weeks = bad planning). This is avoided by planning for the short term, with short or staged sprints or features (the stages should be evolutionary: until one stage is stable and in production, the next one does not start).

-If not all bugs are caught in QA, they go on to Stage (although there is the benefit of one more stage of testing and fixing). This is avoided by fixing in QA and testing exhaustively.

-Many pre-production branches require more merging, or extreme merging. This is avoided by having only one pre-production environment.

-Functionality errors caused by integrations that are not requested in the order of development (a fix is integrated after several developments and breaks or changes the original development or functionality, causing functional errors). This is avoided with better planning and management.

-If developments are not integrated quickly, they cause a functionality gap and the development falls out of date; sometimes there are requests to merge production code into branches that have been left behind or have been open too long.

-If fixes and developments that modify common functionality are integrated together, this generates more fixing, testing, integration and delivery. This is avoided with better planning.
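
The "more than 1-2 weeks = bad planning" rule of thumb is easy to watch for automatically. A minimal sketch, assuming you can obtain the creation date of each branch from your tooling; the function name, the sample branch names and the 14-day default are assumptions for the example.

```python
from datetime import date, timedelta


def stale_branches(created, today, max_age_days=14):
    """Return the branches older than max_age_days, oldest first.

    created: mapping of branch name to the date the branch was created
    """
    limit = timedelta(days=max_age_days)
    old = [name for name, start in created.items() if today - start > limit]
    return sorted(old, key=lambda name: created[name])


if __name__ == "__main__":
    created = {
        "feature1": date(2024, 1, 1),
        "feature2": date(2024, 1, 20),
        "feature3": date(2023, 12, 1),
    }
    # Branches listed here are candidates for merging, splitting or re-planning.
    print(stale_branches(created, today=date(2024, 1, 25)))
```

Running something like this before each integration into QA gives an early warning about branches that are drifting away from the code they will have to merge with.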

It's not ideal, but this strategy has relieved me of a lot of headaches.

And finally... many believe that automating the production process is the ideal... From experience I don't agree, and even less so in the case I just described... but maybe in the future I will write a post about the strategy I used to achieve it... hehe

Using automatic deployments makes your Production environment very vulnerable. Please don’t do that, always deploy to production manually. http://guides.beanstalkapp.com/deployments/best-practices.html